For small websites with a few dozen pages, Googlebot’s crawling behavior is rarely a concern. You publish a page, it gets crawled, and within a few days, it appears in the index. However, when you scale your organic footprint to 5,000, 50,000, or 500,000 pages using programmatic SEO, faceted navigation, or massive localized service hubs, you hit a hard mathematical wall: Crawl Budget.

If Googlebot spends its allocated time and resources crawling low-value URLs, infinite redirect loops, or painfully slow server responses, it will abandon your site before discovering your most critical, revenue-generating pages. You will watch helplessly as the Page indexing report in Google Search Console fills up with URLs marked "Discovered - currently not indexed" and "Crawled - currently not indexed."

This comprehensive, highly technical guide breaks down exactly how crawl budget works in 2026, how to audit your server logs to see what Googlebot is actually doing, and the precise architectural changes required to get thousands of programmatic pages indexed rapidly.

What is Crawl Budget?

Crawl budget is not a single metric you can check in a dashboard. It is a conceptual framework that Google uses to determine how many URLs it will crawl on your site during a specific timeframe.

According to Google's Search Central documentation on managing crawl budget for large sites, crawl budget is determined by two primary factors:

- Crawl Capacity Limit (Server Health): The maximum number of simultaneous connections Googlebot will open to your site, and the delay between fetches, that your server can sustain without degrading the experience for human users. If your server response time (TTFB) spikes or your server starts returning errors while Googlebot is crawling, the bot backs off and reduces its crawl rate.

- Crawl Demand (URL Value): How much Google wants to crawl your site. This is based on your site's overall popularity (PageRank, backlinks, brand authority) and the staleness of your content. If you frequently publish high-quality, entity-rich content that satisfies search intent, demand increases. If you publish thousands of thin, duplicate programmatic pages, demand plummets.

When you launch a large programmatic SEO build—like 5,000 city-specific service pages—you are suddenly demanding massive crawl resources from Google without having the established PageRank to justify it. This is where technical optimization becomes your only lever.

1. Technical Performance: The Crawl Capacity Limit

The most direct way to raise your crawl capacity is to make your site faster. Google does not publish a fixed time allowance per site, but a simplified illustration shows why speed matters: if Googlebot spent 10 seconds fetching URLs one after another and your Time to First Byte (TTFB) is 2 seconds per URL, it could only crawl 5 pages. If your TTFB is 200 milliseconds, it could crawl 50 pages in that same window.
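The arithmetic above can be captured in a tiny model. It is a sketch under loudly simplified assumptions: a fixed crawl window, sequential fetches on each connection, and TTFB as the only cost per URL (download and rendering time ignored). The function name and the `connections` parameter are hypothetical; real Googlebot scheduling is far more adaptive than this.

```python
def pages_per_window(window_ms: int, ttfb_ms: int, connections: int = 1) -> int:
    """Rough upper bound on URLs fetched in a time window.

    Simplified model (an assumption, not Google's algorithm): each connection
    fetches URLs back to back, and every fetch costs exactly `ttfb_ms`.
    Integer milliseconds avoid floating-point rounding surprises.
    """
    if ttfb_ms <= 0 or connections <= 0:
        raise ValueError("ttfb_ms and connections must be positive")
    return (window_ms // ttfb_ms) * connections


# The two scenarios from the paragraph above, plus a 50 ms CDN-style response.
for ttfb in (2_000, 200, 50):
    print(f"TTFB {ttfb:>5} ms -> {pages_per_window(10_000, ttfb)} pages in 10 s")
```

The point of the model is the ratio, not the absolute numbers: cutting TTFB by 10x multiplies throughput by 10x for the same window.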

The Problem with Dynamic Servers (WordPress & SSR)

If your site runs on a traditional LAMP stack (Linux, Apache, MySQL, PHP), as most WordPress installs do, every request from Googlebot forces the server to query the database, execute the PHP template, and generate the HTML. When Googlebot discovers a new XML sitemap with 5,000 URLs and starts requesting them in parallel, database CPU spikes and TTFB can jump from 500ms to 4,000ms. Googlebot detects the strain, lowers its crawl rate, and can take a long time to ramp back up.

The Static Site Generation (SSG) Solution

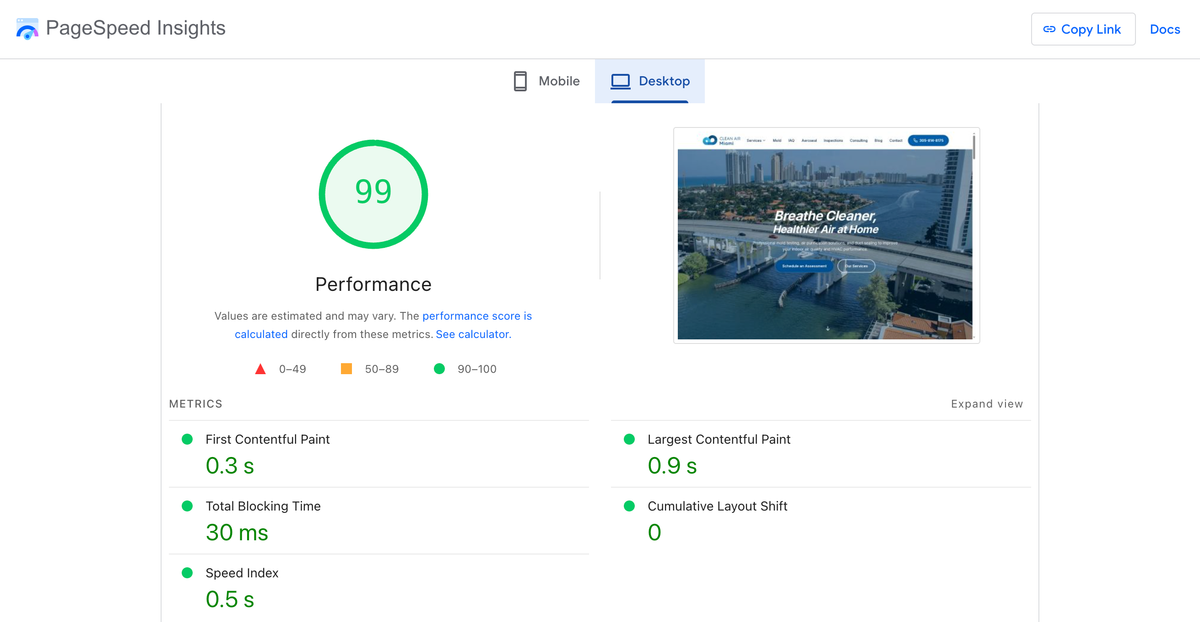

This is why enterprise SEO demands Static Site Generation (SSG). With platforms like AiPress, your 5,000 programmatic pages are pre-built into static HTML files and distributed globally via a Content Delivery Network (CDN) like Cloudflare or Vercel.

When Googlebot requests these pages, there is no database query and no server-side rendering. The CDN serves the cached static file from an edge location, often with a TTFB under 50 milliseconds, so Googlebot can fetch your entire 5,000-page architecture quickly without pushing your server anywhere near its crawl capacity limit.

For a deeper dive into how client-side rendering bottlenecks this process even further, read our guide on Why Client-Side Rendering Destroys Your Rankings. To see how rendering performance limits indexing, read Core Web Vitals and Crawl Rate.

2. Server Log File Analysis: Seeing Through Googlebot's Eyes

Google Search Console's "Crawl Stats" report provides a helpful overview, but it is heavily sampled and delayed. To truly optimize crawl budget, you must analyze your raw server access logs.

Extracting and Filtering the Logs

Your server logs (usually found in /var/log/nginx/access.log or via your CDN provider's dashboard) record every single hit to your website. You need to filter these logs to isolate verified Googlebot user agents.
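As a first pass, the filtering step can be sketched in Python. This assumes nginx's default "combined" log format; if you customized `log_format` or export logs from a CDN, the regular expression must be adapted. The function name and pattern are hypothetical helpers. Matching on the user agent string alone is not verification, since that string is trivially spoofed.

```python
import re
from typing import Iterable, Iterator

# nginx's default "combined" log format:
# $remote_addr - $remote_user [$time_local] "$request" $status $body_bytes_sent
# "$http_referer" "$http_user_agent"
COMBINED_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines: Iterable[str]) -> Iterator[dict]:
    """Yield parsed log entries whose user agent *claims* to be Googlebot.

    First-pass filter only: every IP still needs DNS verification.
    """
    for line in lines:
        match = COMBINED_RE.match(line)
        if match is None:
            continue  # skip malformed lines or lines in another format
        entry = match.groupdict()
        if "googlebot" not in entry["agent"].lower():
            continue
        entry["status"] = int(entry["status"])
        yield entry
```

Because it is a generator, you can pass it an open file handle for `/var/log/nginx/access.log` and it will stream matching entries without loading the whole log into memory.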

Warning: Many malicious bots spoof the Googlebot user agent. Run a reverse DNS lookup on each IP address, confirm the hostname belongs to googlebot.com or google.com, then run a forward DNS lookup on that hostname and confirm it resolves back to the same IP.
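The verification can be sketched with Python's standard `socket` module. Google's procedure pairs the reverse lookup with a forward lookup because anyone who controls an IP's PTR record can make it claim a googlebot.com name; only the forward lookup proves Google's DNS agrees. The resolvers are injectable parameters (a design choice for this sketch, so it can be tested offline); the function names are hypothetical. Google also publishes its crawler IP ranges as JSON files, which you can match against instead.

```python
import socket
from typing import Callable, List, Set, Tuple

# Domains the reverse-DNS hostname must fall under, per the check described above.
GOOGLE_DOMAINS = (".googlebot.com", ".google.com")


def is_google_hostname(hostname: str) -> bool:
    """True if the PTR hostname sits under googlebot.com or google.com."""
    host = hostname.rstrip(".").lower()
    # The leading dot rejects look-alikes such as "fakegooglebot.com".
    return host.endswith(GOOGLE_DOMAINS)


def _forward_lookup(hostname: str) -> Set[str]:
    """All IPv4/IPv6 addresses the hostname resolves to."""
    return {info[4][0] for info in socket.getaddrinfo(hostname, None)}


def verify_googlebot_ip(
    ip: str,
    reverse: Callable[[str], Tuple[str, List[str], List[str]]] = socket.gethostbyaddr,
    forward: Callable[[str], Set[str]] = _forward_lookup,
) -> bool:
    """Reverse lookup, domain check, then forward lookup back to the same IP."""
    try:
        hostname, _aliases, _addresses = reverse(ip)
    except OSError:  # socket.herror / socket.gaierror: no usable PTR record
        return False
    if not is_google_hostname(hostname):
        return False
    try:
        return ip in forward(hostname)
    except OSError:
        return False
```

Cache results per IP when running this over a 30-day log: the same handful of crawler addresses will appear thousands of times, and each lookup is a network round trip.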

What to Look For in the Data

Once you have parsed the verified Googlebot hits over a 30-day period, analyze the data for the following crawl traps:

- The 301 Redirect Chain Drain: If Googlebot requests URL A, which 301 redirects to URL B, which 301 redirects to URL C, you have wasted three "crawl credits" to discover one piece of content. Log files will expose legacy redirects you forgot existed. Every internal link on your site must point to the final, 200 OK destination URL.

- The 404 Error Black Hole: If Googlebot is spending 20% of its crawl budget hitting 404 pages, you have a massive structural problem. Often, these are old URLs linked from legacy blog posts or external sites. Implement 301 redirects for high-value 404s, and let low-value URLs return a 410 (Gone) status code. Google treats 404 and 410 similarly, but a 410 states explicitly that the page was removed on purpose, and Googlebot requests such URLs less and less often over time.

- Faceted Navigation Infinite Spaces: E-commerce and real estate sites often use faceted navigation (e.g., ?color=red&size=large&sort=price_desc). If these parameters are exposed to Googlebot via standard <a> tags without proper canonicalization or robots.txt disallow rules, the parameter combinations generate millions of unique URLs, and Googlebot can spend its entire crawl budget crawling near-identical product grids.

- Parameter Bloat: UTM tracking parameters (?utm_source=facebook), session IDs, or affiliate tracking codes should never be internally linked. If they are, Googlebot treats each variation as a distinct URL.
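The traps above can be quantified once your logs are reduced to verified Googlebot hits. A minimal sketch, assuming two hypothetical inputs: a list of `(path, status)` pairs and your redirect rules exported as a `{source: target}` map. The function names are illustrative, and the 10-hop cap is an arbitrary safety limit for loop detection.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple
from urllib.parse import urlsplit


def final_destination(url: str, redirects: Dict[str, str], max_hops: int = 10) -> Tuple[str, int]:
    """Follow a {source: target} redirect map to its end; return (final_url, hops).

    Raises ValueError on a loop or a chain longer than max_hops.
    """
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overlong chain at {url}")
        seen.add(url)
    return url, hops


def crawl_trap_report(hits: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """Share of Googlebot hits spent on redirects, 404/410s, and query-string URLs."""
    hits = list(hits)
    if not hits:
        return {}
    total = len(hits)
    statuses = Counter(status for _path, status in hits)
    redirected = sum(n for s, n in statuses.items() if 300 <= s < 400)
    gone = statuses[404] + statuses[410]
    parameterized = sum(1 for path, _status in hits if urlsplit(path).query)
    return {
        "redirect_share": redirected / total,
        "not_found_share": gone / total,
        "parameterized_share": parameterized / total,
    }
```

Any internal link whose target reports `hops > 0` should be updated to point straight at the final URL. A high `parameterized_share` is a hint to group the offending URLs by path and look for faceted or tracking parameters.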

3. Mastering XML Sitemaps for Large Architectures

A single sitemap.xml file is limited to 50,000 URLs or 50MB uncompressed. Even so, packing 50,000 URLs into one file is poor practice, because it makes indexing problems much harder to diagnose.

The Index Sitemap Strategy

You must break your XML sitemaps down into logical, granular components using a Sitemap Index file. This allows you to isolate indexing problems within Google Search Console.

Instead of one massive file, split your architecture:

- sitemap-pages.xml (Core service pages, about, contact)
- sitemap-blog.xml (Content marketing and informational posts)
- sitemap-locations-tx.xml (Programmatic pages for Texas)
- sitemap-locations-ca.xml (Programmatic pages for California)

If you see that your Texas locations have a 95% indexation rate, but your California locations only have a 20% indexation rate, you immediately know where to focus your technical audit.
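The split described above can be automated at build time. A minimal sketch using Python's standard `xml.etree.ElementTree`; the `classify` routing rule, the `/locations/<state>/` URL layout, and example.com are hypothetical placeholders for your own structure.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict
from typing import Callable, Dict, Iterable, List

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000


def group_urls(urls: Iterable[str], classify: Callable[[str], str]) -> Dict[str, List[str]]:
    """Bucket URLs into named child sitemaps, enforcing the 50,000-URL limit."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for url in urls:
        groups[classify(url)].append(url)
    for name, members in groups.items():
        if len(members) > MAX_URLS_PER_SITEMAP:
            raise ValueError(f"{name} has {len(members)} URLs; split it further")
    return dict(groups)


def build_sitemap_index(base_url: str, sitemap_names: Iterable[str]) -> str:
    """Serialize a <sitemapindex> pointing at each child sitemap file."""
    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for name in sorted(sitemap_names):
        entry = ET.SubElement(root, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base_url.rstrip('/')}/{name}"
    return ET.tostring(root, encoding="unicode")


def classify(url: str) -> str:
    """Hypothetical routing rule: /locations/<state>/... gets a per-state file."""
    parts = url.split("/")  # ["https:", "", host, first_segment, ...]
    if len(parts) > 4 and parts[3] == "locations":
        return f"sitemap-locations-{parts[4]}.xml"
    if len(parts) > 3 and parts[3] == "blog":
        return "sitemap-blog.xml"
    return "sitemap-pages.xml"
```

Submitting only the index file in Search Console is enough; each child sitemap then gets its own indexing breakdown, which is what makes the Texas-versus-California comparison possible.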

The <lastmod> Tag Requirement

Google has stated that it uses the <lastmod> (Last Modified) element in XML sitemaps as a crawl scheduling signal, provided the value is consistently and verifiably accurate. If you update a page, you must update its <lastmod> date. If you generate fake <lastmod> dates (e.g., setting them all to the current date every night), Google can learn that your values are unreliable and ignore them, crippling your crawl efficiency.

At AiPress, our static generation pipeline automatically injects the precise Git commit timestamp into the <lastmod> tag during the build process, ensuring 100% accuracy and maximum crawl trust.
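The same idea can be sketched independently of any platform. This is not AiPress's pipeline, only an illustration under stated assumptions: it reads the committer date of the last Git commit touching a page's source file via `git log -1 --format=%cI`, and the mapping from each public URL to its source file is hypothetical.

```python
import subprocess
import xml.etree.ElementTree as ET
from typing import Iterable, Optional, Tuple

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def git_lastmod(source_path: str) -> Optional[str]:
    """Committer date (strict ISO 8601) of the last commit touching source_path.

    Returns None if the file has no history. Needs full history: in a shallow
    clone (e.g. depth=1 in CI) every file would report the same commit.
    """
    result = subprocess.run(
        ["git", "log", "-1", "--format=%cI", "--", source_path],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip() or None


def build_urlset(entries: Iterable[Tuple[str, Optional[str]]]) -> str:
    """Serialize a <urlset>, emitting <lastmod> only when a real date is known."""
    root = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(root, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:  # omit the element rather than invent a date
            ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(root, encoding="unicode")
```

A build step might call `build_urlset([(url, git_lastmod(path)) for url, path in pages])`, where `pages` is your own URL-to-source mapping. If a shared template changes, decide deliberately whether that counts as a meaningful update for every page before it bumps thousands of dates at once.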

4. Fixing "Discovered - Currently Not Indexed"

This is the most common and frustrating status in Google Search Console for programmatic SEO sites. It means Google knows the URL exists (usually because it found it in a sitemap) but has not crawled it yet. According to Google's documentation, this typically happens because crawling the URL was expected to overload the site, so Google rescheduled the crawl.

To resolve this:

- Reduce Server Load: Transition to a static architecture or aggressive edge caching.

- Prune Thin Content: If you launched 5,000 city pages that all feature the same spun text with only the city name swapped out, Google's systems can detect the boilerplate pattern during the first few crawls. They may then deprioritize crawling the remaining 4,900 pages because they predict those will also be low-value. You must inject unique, entity-rich data into every programmatic template.

- Internal Linking Power: A URL sitting in an XML sitemap with zero internal links pointing to it is considered an "Orphan Page." Googlebot prioritizes crawling pages that are heavily linked from within your site hierarchy. If a page is important enough to index, it must be linked contextually. Learn how to architect these links in our guide on Internal Linking Architecture for SEO Hubs.
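Orphan pages can be found by diffing your sitemap against the links your own pages actually contain. This sketch assumes you already have each page's HTML in a `{url: html}` dict (a hypothetical input, e.g. read from your static build output). It uses only the standard library's `HTMLParser`, counts only `<a href>` links, and does not normalize trailing slashes or query strings, so URLs must be written consistently.

```python
from html.parser import HTMLParser
from typing import Dict, Iterable, List, Set
from urllib.parse import urldefrag, urljoin


class LinkCollector(HTMLParser):
    """Collect absolute, fragment-free href targets of <a> tags."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: Set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative links against the page URL and drop "#fragment".
            absolute, _fragment = urldefrag(urljoin(self.base_url, href))
            self.links.add(absolute)


def find_orphans(sitemap_urls: Iterable[str], pages: Dict[str, str]) -> List[str]:
    """Sitemap URLs that no *other* page links to."""
    linked: Set[str] = set()
    for url, html in pages.items():
        collector = LinkCollector(url)
        collector.feed(html)
        linked |= collector.links - {url}  # a page linking to itself doesn't count
    return sorted(set(sitemap_urls) - linked)
```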

5. Fixing "Crawled - Currently Not Indexed"

This status is arguably worse. It means Googlebot successfully crawled the URL, rendered the HTML, and then decided the content wasn't good enough to put in the index.

This is almost always a content quality or canonicalization issue, not a crawl budget issue.

- Cannibalization: The page is too similar to another page already in the index.

- Thin Content: The page lacks sufficient word count, unique entities, or semantic depth to satisfy search intent.

- Rendering Failures: The page relies heavily on CSR, and the crucial content failed to load during the rendering phase.

If you are facing this issue across thousands of programmatic pages, you cannot fix it by submitting the URLs again. You must redesign the page template to include richer data, schema markup, and unique local entities, then wait for Googlebot to recrawl the updated architecture.
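Before redesigning templates, you can measure how much of each page is shared boilerplate. One common technique (not something Google documents using) is word-shingle Jaccard similarity. In this minimal sketch, the 5-word shingle size and 0.8 default threshold are illustrative assumptions. Comparing every pair is O(n²), so for thousands of pages you would switch to MinHash with locality-sensitive hashing.

```python
import re
from itertools import combinations
from typing import Dict, List, Set, Tuple

Shingle = Tuple[str, ...]


def shingles(text: str, k: int = 5) -> Set[Shingle]:
    """Set of overlapping k-word sequences ("shingles") in the text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: Set[Shingle], b: Set[Shingle]) -> float:
    """|A ∩ B| / |A ∪ B|; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicates(pages: Dict[str, str], threshold: float = 0.8,
                    k: int = 5) -> List[Tuple[str, str, float]]:
    """Page pairs whose shingle overlap meets the threshold (checks all pairs)."""
    sets = {url: shingles(text, k) for url, text in pages.items()}
    flagged = []
    for (url_a, sh_a), (url_b, sh_b) in combinations(sets.items(), 2):
        score = jaccard(sh_a, sh_b)
        if score >= threshold:
            flagged.append((url_a, url_b, score))
    return flagged
```

On a short 21-word template where only the city name changes, two pages already score about 0.55. The longer the shared boilerplate, the closer the score gets to 1.0, which is exactly the pattern the "Cannibalization" and "Thin Content" bullets describe.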

6. Strategic Use of Robots.txt

Your robots.txt file is your primary weapon for defending your crawl budget. It allows you to explicitly block Googlebot from wasting time on low-value sections of your site.

What to Block:

- Internal search result pages (e.g., Disallow: /search?q=)

- Faceted navigation parameters (e.g., Disallow: /*?filter=)

- Admin portals, login pages, and user account dashboards.

- API endpoints (if they are being exposed to crawlers).

- Staging and development subdomains (each subdomain is governed by its own robots.txt file).
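Before deploying rules like these, it helps to test them against real URLs from your logs. Python's built-in `urllib.robotparser` only does prefix matching and ignores the `*` and `$` wildcards Googlebot supports, so this sketch implements the matching directly: `*` matches any character sequence, a trailing `$` anchors the end, the longest matching pattern wins, and Allow wins a tie. It is a simplified sketch that assumes you have already selected the rule group for Googlebot and skips percent-encoding normalization; the `Allow` carve-out in `RULES` is hypothetical.

```python
import re
from typing import Iterable, Tuple


def _to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a robots.txt path pattern ('*' wildcard, optional '$' end anchor)."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    regex = "".join(".*" if ch == "*" else re.escape(ch) for ch in body)
    return re.compile(regex + (r"\Z" if anchored else ""))


def is_allowed(rules: Iterable[Tuple[str, str]], path: str) -> bool:
    """Evaluate one group's Allow/Disallow rules against a path plus query string.

    Longest matching pattern wins; on a tie, Allow wins. No match means allowed.
    """
    best_len, allowed = -1, True
    for directive, pattern in rules:
        if not pattern:
            continue  # an empty "Disallow:" blocks nothing
        if _to_regex(pattern).match(path):  # match() anchors at the path start
            is_allow = directive.lower() == "allow"
            if len(pattern) > best_len or (len(pattern) == best_len and is_allow):
                best_len, allowed = len(pattern), is_allow
    return allowed


# Rules from the list above, plus a hypothetical Allow carve-out.
RULES = [
    ("Disallow", "/search?q="),
    ("Disallow", "/*?filter="),
    ("Disallow", "/admin/"),
    ("Allow", "/admin/public/"),
]
```

Running your most-crawled parameterized URLs through `is_allowed` quickly exposes gaps. For example, `/*?filter=` only blocks URLs where `filter` is the first parameter, so `/shoes?size=9&filter=red` stays crawlable; a broader pattern such as `/*filter=` catches both positions.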

Crucial Note: Do not block CSS, JavaScript, or image files in your robots.txt. As discussed in the Two Waves of Indexing, Googlebot must render your page to understand its layout and mobile-friendliness. If you block the assets required to render the page, Googlebot may see a broken, unstyled mess, which can hurt how it understands and ranks your content.

Conclusion: Scale Requires Discipline

You cannot brute-force your way into the Google index. Launching 5,000 pages on a slow, dynamic server with poor internal linking and spun content will result in massive server costs and zero organic traffic.

Optimizing crawl budget requires ruthless technical discipline. You must serve static, lightning-fast HTML. You must eliminate redirect chains and 404 errors. You must architect a logical XML sitemap structure backed by accurate <lastmod> data, and you must protect your server resources using strict robots.txt rules.

By respecting Googlebot's resources and providing a frictionless crawling experience, you ensure that every programmatic page you launch is rapidly discovered, deeply indexed, and positioned to drive revenue.
